MPI

Revision as of 19:08, 22 September 2016

A Primer on Parallel Programming

To pull a bigger wagon it is easier to add more oxen than to find (or build) a bigger ox.

—Gropp, Lusk & Skjellum, Using MPI

To build a house as quickly as possible, we do not look for a faster person to do all the construction; we use many people and spread the work among them so that tasks are performed at the same time, "in parallel". Computational problems are similar. There is a limit to how fast a single machine can work, so we divide up the problem and assign work to multiple computers to be completed concurrently.

The most significant concept to master in designing and building parallel applications is communication, because that is where most of the complexity arises. For multiple workers to accomplish a task in parallel, they need to be able to communicate with one another. In software, we have many processes, each working on part of a solution and needing values that were computed (or are yet to be computed!) by other processes.

There are two major models of computational parallelism: shared memory, and distributed memory.

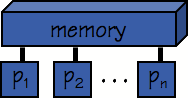

In shared memory parallelism (commonly and casually abbreviated SMP) all processors see the same memory image; to put it another way, all memory is globally addressable. Communication between processes on an SMP machine is implicit: any process can write values to memory that can subsequently be read and manipulated directly by others. The challenge in writing these kinds of programs is data consistency: one must take steps to ensure data is not modified by more than one processor at a time.

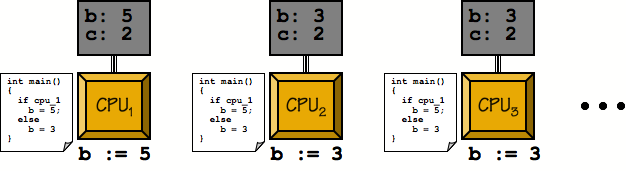

Distributed memory parallelism is equivalent to a collection of workstations linked by a dedicated network for communication: a cluster. In this model, processes each have their own private memory, and may run on physically distinct machines. When processes need to communicate, they do so by sending messages. A process typically invokes a function to send data and the destination process invokes a function to receive it. A major challenge in distributed memory programming is how to minimize communication overhead. Networks, even the fastest dedicated hardware interconnects, transmit data orders of magnitude slower than within a single machine. Memory access times are typically measured in ones to hundreds of nanoseconds, while network latency is typically expressed in microseconds.
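To make the latency point concrete, a common first-order way to estimate the cost of one message is: time = latency + size / bandwidth. The sketch below encodes that model in C. The model itself, the function names `message_time` and `split_time`, and the latency and bandwidth figures in the usage note are illustrative assumptions, not measurements of any particular network or part of MPI.

```c
#include <assert.h>

/* Illustrative first-order cost model (an assumption, not an MPI API):
   delivering one message costs a fixed start-up latency plus the time
   needed to push its bytes through the link. */
double message_time(double latency_s, double bandwidth_bytes_per_s,
                    double bytes)
{
    assert(latency_s >= 0.0);
    assert(bandwidth_bytes_per_s > 0.0);
    assert(bytes >= 0.0);
    return latency_s + bytes / bandwidth_bytes_per_s;
}

/* Total time to send `bytes` of data split into `nmsgs` equal messages:
   the latency term is paid once per message. */
double split_time(double latency_s, double bandwidth_bytes_per_s,
                  double bytes, int nmsgs)
{
    assert(nmsgs > 0);
    return nmsgs * message_time(latency_s, bandwidth_bytes_per_s,
                                bytes / nmsgs);
}
```

With an assumed latency of 1 microsecond and bandwidth of 1 GB/s, `split_time(1e-6, 1e9, 8000.0, 1)` is 9 microseconds, while `split_time(1e-6, 1e9, 8000.0, 1000)` is about 1.008 milliseconds. Under this model the per-message latency dominates, which is one reason to prefer fewer, larger messages where the algorithm allows it.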

The remainder of this tutorial will consider distributed memory programming on a cluster using the Message Passing Interface.

What is MPI?

The Message Passing Interface (MPI) is, strictly speaking, a standard describing a set of subroutines, functions, objects, etc., with which one can write parallel programs. Many different implementations of the standard have been produced, such as Open MPI, MPICH, and MVAPICH. The standard describes how MPI should be called from Fortran and C (and, in versions before MPI-3.0, C++), and unofficial "bindings" can be found for several other languages.

MPI is an open, non-proprietary standard, so an MPI program can easily be ported to many different computers. Applications that use it can be run on a large number of processors at once, often with good efficiency (called "scalability"). Because memory is local to each process, some aspects of debugging are simplified: one process cannot interfere with the memory of another, and if a program generates a segmentation fault, the resulting core file can be processed by standard serial debugging tools. However, due to the need to manage communication and synchronization explicitly, MPI programs may appear more complex than programs written with tools that support implicit communication. Furthermore, in designing an MPI program one should take care to minimize communication overhead so that it does not overwhelm the speed-up gained from parallel computation.

In the following we will attempt to highlight a few of these issues and discuss strategies to deal with them. Suggested references are presented at the end of this tutorial and the reader is encouraged to consult them for additional information.

MPI Programming Basics

The MPI standard defines bindings for C and Fortran; C++ bindings were also defined in earlier versions, but they were deprecated in MPI-2.2 and removed in MPI-3.0. This tutorial presents code in C and Fortran, the most commonly used of these languages, but the concepts apply directly to whatever language you are using. Function names and constants are standardized, so generalizing this tutorial to another language should be straightforward, assuming the programmer is already familiar with that language.

In the interest of simplicity and illustrating key concepts, our goal will be to parallelize the venerable "Hello, World!" program, which appears below for reference.

C code: hello.c

```c
#include <stdio.h>

int main(void)
{
    printf("Hello, world!\n");
    return(0);
}
```

Fortran code: hello.f90

```fortran
program hello
  print *, 'Hello, world!'
end program hello
```

When compiled and run, the output of this program looks something like this:

```
[orc-login1 ~]$ vi hello.c
[orc-login1 ~]$ cc -Wall hello.c -o hello
[orc-login1 ~]$ ./hello
Hello, world!
```

SPMD Programming

Parallel programs written using MPI make use of an execution model called Single Program, Multiple Data, or SPMD. Rather than writing several different applications that then run in parallel, the SPMD model starts a number of copies of the same program. Each process in an MPI job is assigned a unique integer identifier, referred to as the rank of the process, which it can obtain at run time. Where the behaviour of the program must differ between processes, conditional statements based on the rank are used so that each process executes the appropriate instructions.
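As an illustration of one program working on different data, a common SPMD pattern is for each process to use its rank to select its own slice of a loop. The helper below is a hypothetical sketch (`block_range` is not an MPI function); in a real program, `rank` and `size` would come from the MPI calls introduced in the Rank and Size section.

```c
#include <assert.h>

/* Hypothetical helper: split the iterations 0..n-1 as evenly as possible
   among `size` processes. Process `rank` gets the half-open range
   [*lo, *hi). The first n % size ranks receive one extra iteration. */
void block_range(int n, int rank, int size, int *lo, int *hi)
{
    assert(n >= 0 && size > 0 && rank >= 0 && rank < size);
    int base = n / size;   /* iterations every rank gets */
    int rem = n % size;    /* leftover iterations to hand out */
    *lo = rank * base + (rank < rem ? rank : rem);
    *hi = *lo + base + (rank < rem ? 1 : 0);
}
```

Every process calls `block_range` with the same `n` and `size` but its own `rank`, then loops `for (i = lo; i < hi; i++)`. With `n = 10` and 4 processes, ranks 0 to 3 get the ranges [0,3), [3,6), [6,8) and [8,10).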

Framework

To make use of the MPI facilities, we must include the relevant header file (mpi.h for C/C++, mpif.h for Fortran) and link against the MPI library. While it is perfectly reasonable to do this manually, most MPI implementations provide convenient wrapper scripts (C: mpicc, Fortran: mpif90, C++: mpiCC) that take care of include and library directories, library linkage, and so on. Our examples will all use these scripts, and we recommend that you do the same unless you have a specific reason to compile manually.

Note that apart from the MPI library linked into our executable, there is nothing coordinating the activities of the running programs; this coordination is performed cooperatively inside the MPI library of each running process. We are therefore required to initialize the library explicitly, by calling an initialization function before we use any MPI features in our code. The prototype for this function appears below:

C API:

```c
int MPI_Init(int *argc, char **argv[]);
```

Fortran API:

```fortran
MPI_INIT(IERR)
    INTEGER :: IERR
```

The arguments to the C function are pointers to the same argc and argv variables that hold the program's command-line arguments. This permits MPI to "fix" the command-line arguments in case the job launcher has modified them. Note also that the C MPI functions return their error status explicitly, whereas all Fortran routines take an additional argument, IERR, in which they record the error status before returning.

Similarly, we must call a function to allow the library to do any clean-up that might be required before our program exits. The prototype for this function, which takes no arguments other than the error parameter in Fortran, appears below:

C API:

```c
int MPI_Finalize(void);
```

Fortran API:

```fortran
MPI_FINALIZE(IERR)
    INTEGER :: IERR
```

As a rule of thumb, it is a good idea to perform the initialization as the first statement of our program, and the finalize operation as the last statement before program termination. Let's take a moment and modify our Hello, world! program to take these basic issues into consideration.

C code: phello0.c

```c
#include <stdio.h>
#include <mpi.h>

int main(int argc, char *argv[])
{
    MPI_Init(&argc, &argv);      /* first: start up the MPI library */

    printf("Hello, world!\n");

    MPI_Finalize();              /* last: let MPI clean up before exit */
    return(0);
}
```

Fortran code: phello0.f90

```fortran
program phello0
  include "mpif.h"
  integer :: ierror

  call MPI_INIT(ierror)
  print *, 'Hello, world!'
  call MPI_FINALIZE(ierror)
end program phello0
```

Rank and Size

While it is now possible to run this program under the control of MPI, each process will still just print the original string, which isn't very interesting. Let's begin by having each process output its rank and the total number of processes running. This information is obtained at run time using the following functions.

C API:

```c
int MPI_Comm_size(MPI_Comm comm, int *nproc);
int MPI_Comm_rank(MPI_Comm comm, int *myrank);
```

Fortran API:

```fortran
MPI_COMM_SIZE(COMM, NPROC, IERR)
    INTEGER :: COMM, NPROC, IERR
MPI_COMM_RANK(COMM, RANK, IERR)
    INTEGER :: COMM, RANK, IERR
```

MPI_Comm_size reports the number of processes running as part of this job by storing it in the output parameter nproc. Similarly, MPI_Comm_rank stores the rank of the calling process in the output parameter myrank. Ranks in MPI start counting from 0 rather than 1, so given N processes we expect the ranks to be 0..(N-1). The comm argument is a communicator, which is a set of processes capable of sending messages to one another. For the purpose of this tutorial we will always pass in the predefined value MPI_COMM_WORLD, which is simply all the processes started with the job (it is possible to define and use your own communicators; however, that is beyond the scope of this tutorial, and the reader is referred to the provided references for additional detail).

Let us incorporate these functions into our program, and have each process output its rank and size information. Note that since we are still having all processes perform the same function, there are no conditional blocks required in the code.

C code: phello1.c

```c
#include <stdio.h>
#include <mpi.h>

int main(int argc, char *argv[])
{
    int rank, size;

    MPI_Init(&argc, &argv);
    MPI_Comm_rank(MPI_COMM_WORLD, &rank);   /* this process: 0..size-1 */
    MPI_Comm_size(MPI_COMM_WORLD, &size);   /* total number of processes */

    printf("Hello, world! "
           "from process %d of %d\n", rank, size);

    MPI_Finalize();
    return(0);
}
```

Fortran code: phello1.f90

```fortran
program phello1
  implicit none
  include "mpif.h"
  integer :: rank, size, ierror

  call MPI_INIT(ierror)
  call MPI_COMM_SIZE(MPI_COMM_WORLD, size, ierror)
  call MPI_COMM_RANK(MPI_COMM_WORLD, rank, ierror)
  print *, 'Hello from process ', rank, ' of ', size
  call MPI_FINALIZE(ierror)
end program phello1
```

Compile and run this program on 2, 4 and 8 processors. Note that each running process produces output based on the values of its local variables, as one should expect. As you run the program on more processors, you will start to see that the output from the different processes does not appear in a regular order. The standard output of all running processes is collected and interleaved in whatever order it happens to arrive; you should make no assumptions about the order of output from different processes.

```
[orc-login2 ~]$ vi phello1.c
[orc-login2 ~]$ mpicc -Wall phello1.c -o phello1
[orc-login2 ~]$ mpirun -np 4 ./phello1
Hello, world! from process 0 of 4
Hello, world! from process 1 of 4
Hello, world! from process 2 of 4
Hello, world! from process 3 of 4
```

Communication

While we now have a parallel version of our "Hello, World!" program, it isn't a very interesting one as there is no communication between the processes. Let's explore this issue by having the processes say hello to one another, rather than just sending the output to stdout.

The specific functionality we seek is to have each process send its hello message to the "next" one in the communicator. We will define this as a rotation: process rank i sends its message to process rank i+1, with process N-1 wrapping around and sending to process 0. That is to say, process i sends to process (i+1)%N, where there are N processes and % is the modulus operator.
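The neighbour arithmetic for this rotation can be isolated in two small helpers, one for the rank to send to and one for the rank to receive from. This is a hypothetical sketch: `ring_next` and `ring_prev` are illustrative names, not MPI functions.

```c
#include <assert.h>

/* Hypothetical helpers for a ring of `size` processes numbered 0..size-1. */

/* Rank to send to: the next process, with size-1 wrapping around to 0. */
int ring_next(int rank, int size)
{
    assert(size > 0 && rank >= 0 && rank < size);
    return (rank + 1) % size;
}

/* Rank to receive from: the previous process, with 0 wrapping to size-1.
   In C the result of % takes the sign of the left operand, so (0 - 1) % size
   would be -1; adding size first keeps the left operand non-negative. */
int ring_prev(int rank, int size)
{
    assert(size > 0 && rank >= 0 && rank < size);
    return (rank + size - 1) % size;
}
```

With these helpers, a program would set `sendto = ring_next(rank, size)` and `recvfrom = ring_prev(rank, size)`. The Fortran `MOD` intrinsic likewise takes the sign of its first argument, so the same "add size first" form is needed there.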

First we need to learn how to send and receive data using MPI. While MPI provides means of sending and receiving data of any composition, any consideration of such issues is too advanced for this tutorial and we'll refer again to the provided references for additional information. The most basic way of organizing data to be sent is to send a sequence of one or more instances of an atomic data type. This is supported natively in languages with contiguous allocation of arrays.
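For example, a C array is stored contiguously, so a buffer address plus an element count describes it completely. A column of a row-major two-dimensional array is not contiguous, so one simple approach (short of the derived data types listed at the end of this tutorial) is to copy the column into a contiguous buffer before sending it. The function below is a hypothetical sketch of that packing step; `pack_column` is not part of MPI.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical helper: copy column `col` of a rows x cols matrix stored
   in row-major order into the contiguous buffer `out` (length rows).
   Consecutive elements of a column are `cols` doubles apart in memory,
   so they cannot be described by a single start address and count. */
void pack_column(const double *a, size_t rows, size_t cols, size_t col,
                 double *out)
{
    assert(col < cols);
    for (size_t r = 0; r < rows; r++)
        out[r] = a[r * cols + col];
}
```

After packing, `out` could be handed to MPI_Send (described next) with a count of `rows` and the datatype MPI_DOUBLE, whereas a row can be sent directly starting from `&a[r * cols]`.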

Data is sent using the MPI_Send function. Referring to the following function prototypes, MPI_Send sends count contiguous items of type datatype, stored in the buffer pointed to by message, to the process whose rank is dest. tag is a programmer-specified identifier that becomes associated with the message and can be used to organize the communication (for example, to distinguish two distinct streams of data interleaved piecemeal). None of our examples require this functionality, so we will always pass in the value 0 for this parameter. comm is again the communicator in which the send occurs, for which we will continue to use the predefined MPI_COMM_WORLD.

C API:

```c
int MPI_Send
(
    const void *message,    /* reference to data to be sent */
    int count,              /* number of items in message */
    MPI_Datatype datatype,  /* type of item in message */
    int dest,               /* rank of process to receive message */
    int tag,                /* programmer specified identifier */
    MPI_Comm comm           /* communicator */
);
```

Fortran API:

```fortran
MPI_SEND(MESSAGE, COUNT, DATATYPE, DEST, TAG, COMM, IERR)
    <type> MESSAGE(*)
    INTEGER :: COUNT, DATATYPE, DEST, TAG, COMM, IERR
```

Note that the datatype argument, specifying the type of data contained in the message buffer, is a variable. This is intended to provide a layer of compatibility between processes that could be running on architectures for which the native format for these types differs. For more complex situations it is possible to register new data types, however for the purpose of this tutorial we will restrict ourselves to the pre-defined types provided by MPI. There is an MPI type pre-defined for all atomic data types in the source language (for C: MPI_CHAR, MPI_FLOAT, MPI_SHORT, MPI_INT, etc. and for Fortran: MPI_CHARACTER, MPI_INTEGER, MPI_REAL, etc.). Please refer to the provided references for a full list of these types if interested.
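To see why describing the type matters, consider that the same 32-bit integer is laid out in memory with its bytes in opposite orders on little-endian and big-endian machines. The hypothetical sketch below converts an integer to and from an explicit big-endian byte sequence; it illustrates the kind of conversion that describing data as, say, MPI_INT rather than as raw bytes is intended to let the library handle when the two ends differ. The function names are illustrative only.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical helpers: write a 32-bit value as 4 bytes, most significant
   first (big-endian), and read it back. Shifts operate on values, not on
   memory, so these produce the same bytes on any host. */
void put_be32(uint32_t v, unsigned char out[4])
{
    out[0] = (unsigned char)(v >> 24);
    out[1] = (unsigned char)(v >> 16);
    out[2] = (unsigned char)(v >> 8);
    out[3] = (unsigned char)v;
}

uint32_t get_be32(const unsigned char in[4])
{
    return ((uint32_t)in[0] << 24) | ((uint32_t)in[1] << 16) |
           ((uint32_t)in[2] << 8) | (uint32_t)in[3];
}

/* Returns 1 if this host stores the least significant byte first. */
int host_is_little_endian(void)
{
    uint32_t probe = 1;
    unsigned char *first = (unsigned char *)&probe;
    assert(*first == 0 || *first == 1);
    return *first == 1;
}
```

On a little-endian host, the raw in-memory bytes of 0x01020304 are 04 03 02 01, so a big-endian receiver that reinterpreted them directly as an integer would read 0x04030201 instead.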

We can summarize the MPI_Recv call relatively quickly, as it works in much the same way. Referring to the function prototypes below, message is now a pointer to an allocated buffer large enough to hold count contiguous items of type datatype, which are received from the process whose rank is source. MPI_Recv takes one additional argument, status, which is a reference to an allocated MPI_Status structure in C and an array of MPI_STATUS_SIZE integers in Fortran. It is filled in with information about the received message when the call returns. We will not make use of this information in this tutorial; however, the argument must still be supplied (since MPI-2, the predefined value MPI_STATUS_IGNORE may be passed when the status is not needed).

C API:

```c
int MPI_Recv
(
    void *message,          /* reference to buffer for received data */
    int count,              /* number of items to be received */
    MPI_Datatype datatype,  /* type of item to be received */
    int source,             /* rank of process from which to receive */
    int tag,                /* programmer specified identifier */
    MPI_Comm comm,          /* communicator */
    MPI_Status *status      /* stores info. about received message */
);
```

Fortran API:

```fortran
MPI_RECV(MESSAGE, COUNT, DATATYPE, SOURCE, TAG, COMM, STATUS, IERR)
    <type> :: MESSAGE(*)
    INTEGER :: COUNT, DATATYPE, SOURCE, TAG, COMM, STATUS(MPI_STATUS_SIZE), IERR
```

Keep in mind that both sends and receives are explicit calls. The sending process must know the rank of the process to which it is sending, and the receiving process must know the rank of the process from which to receive. In this case, since all our processes are performing the same action, we need only derive the appropriate arithmetic so that, given its rank, each process knows both where to send its data, (rank + 1) % size, and from which process to receive, ((rank + size) - 1) % size. Adding size before subtracting 1 keeps the left operand of % non-negative for rank 0, whose predecessor is size - 1. We'll now make the required modifications to our parallel "Hello, world!" program. As a first cut, we'll simply have each process first send its message, then receive what is being sent to it.

C code: phello2.c

```c
#include <stdio.h>
#include <mpi.h>

#define BUFMAX 81

int main(int argc, char *argv[])
{
    char outbuf[BUFMAX], inbuf[BUFMAX];
    int rank, size;
    int sendto, recvfrom;
    MPI_Status status;

    MPI_Init(&argc, &argv);
    MPI_Comm_rank(MPI_COMM_WORLD, &rank);
    MPI_Comm_size(MPI_COMM_WORLD, &size);

    snprintf(outbuf, BUFMAX, "Hello, world! from process %d of %d", rank, size);

    sendto = (rank + 1) % size;              /* next rank, N-1 wraps to 0 */
    recvfrom = ((rank + size) - 1) % size;   /* previous rank, 0 wraps to N-1 */

    /* Every process sends first, then receives: this relies on buffering. */
    MPI_Send(outbuf, BUFMAX, MPI_CHAR, sendto, 0, MPI_COMM_WORLD);
    MPI_Recv(inbuf, BUFMAX, MPI_CHAR, recvfrom, 0, MPI_COMM_WORLD, &status);

    printf("[P_%d] process %d said: \"%s\"]\n", rank, recvfrom, inbuf);

    MPI_Finalize();
    return(0);
}
```

Fortran code: phello2.f90

```fortran
program phello2
  implicit none
  include 'mpif.h'

  integer, parameter :: BUFMAX=81
  character(len=BUFMAX) :: outbuf, inbuf
  integer :: rank, num_procs, ierr
  integer :: sendto, recvfrom
  integer :: status(MPI_STATUS_SIZE)

  call MPI_INIT(ierr)
  call MPI_COMM_RANK(MPI_COMM_WORLD, rank, ierr)
  call MPI_COMM_SIZE(MPI_COMM_WORLD, num_procs, ierr)

  ! i0 writes each integer in the minimum width, without padding
  write(outbuf, '(a,i0,a,i0)') 'Hello, world! from process ', rank, ' of ', num_procs

  sendto = mod((rank + 1), num_procs)
  recvfrom = mod(((rank + num_procs) - 1), num_procs)

  ! Every process sends first, then receives: this relies on buffering.
  call MPI_SEND(outbuf, BUFMAX, MPI_CHARACTER, sendto, 0, MPI_COMM_WORLD, ierr)
  call MPI_RECV(inbuf, BUFMAX, MPI_CHARACTER, recvfrom, 0, MPI_COMM_WORLD, status, ierr)

  print *, 'Process', rank, ': Process', recvfrom, ' said: ', trim(inbuf)

  call MPI_FINALIZE(ierr)
end program phello2
```

Compile and run this program on 2, 4 and 8 processors. While it certainly seems to work as intended, there is something we are overlooking. The MPI standard does not require sends to be buffered; that is, we should not assume that MPI_Send copies our message into a library buffer and returns immediately. If the send is not buffered, the code as written deadlocks: every process blocks in its MPI_Send, and none reaches the MPI_Recv that would consume a message, because each process's receive comes after its own send. The libraries on our systems clearly provide some buffering, since the code did not deadlock, but it is poor design to rely on this. Your code may fail on a system whose library provides no buffering, and even where buffering is provided, the call will still block once the buffer fills up.

```
[orc-login2 ~]$ mpicc -Wall phello2.c -o phello2
[orc-login2 ~]$ mpirun -np 4 ./phello2
[P_0] process 3 said: "Hello, world! from process 3 of 4"]
[P_1] process 0 said: "Hello, world! from process 0 of 4"]
[P_2] process 1 said: "Hello, world! from process 1 of 4"]
[P_3] process 2 said: "Hello, world! from process 2 of 4"]
```

Safe MPI

The MPI standard defines MPI_Send and MPI_Recv to be blocking calls. The correct way to interpret this is that MPI_Send will not return until it is safe for the calling program to modify the contents of the provided message buffer. Similarly, MPI_Recv will not return until the entire contents of the message are available in the provided message buffer.

It should be obvious that the availability of buffering in the MPI library is irrelevant to receive operations. It is meaningless to speak of buffering something that hasn't yet been received. As such we assume that MPI_Recv will always block until the message is fully received. MPI_Send on the other hand need not block if there is buffering present in the library. Once the message is copied out of the buffer for delivery, it is safe for the user to modify the buffer, so the call can return. This is why our parallel "Hello, world!" program doesn't deadlock as we have implemented it, even though all processes call MPI_Send first. This relies on there being buffering in the MPI library on our systems, which is not required by the MPI standard, and thus we refer to such a program as unsafe MPI.

A safe MPI program is one that does not rely on a buffered underlying implementation in order to function correctly. The following pseudo-code fragments illustrate this concept clearly:

Deadlock

```
...
if (rank == 0)
{
    MPI_Recv(from 1);
    MPI_Send(to 1);
}
else if (rank == 1)
{
    MPI_Recv(from 0);
    MPI_Send(to 0);
}
...
```

Both processes call MPI_Recv before the matching send. Regardless of buffering, each blocks in its receive waiting for a message the other never gets to send, and the application deadlocks.

Unsafe

```
...
if (rank == 0)
{
    MPI_Send(to 1);
    MPI_Recv(from 1);
}
else if (rank == 1)
{
    MPI_Send(to 0);
    MPI_Recv(from 0);
}
...
```

This is essentially what our parallel "Hello, world!" program was doing, and it may work if the library provides buffering. If the library is unbuffered, or if the messages are large enough to exhaust the available buffer space, both processes will block in their sends and deadlock.

Safe

```
...
if (rank == 0)
{
    MPI_Send(to 1);
    MPI_Recv(from 1);
}
else if (rank == 1)
{
    MPI_Recv(from 0);
    MPI_Send(to 0);
}
...
```

Even in the absence of buffering, each send here is paired with a corresponding receive on the other process, posted in a compatible order. While a process may block briefly until the corresponding call is made, deadlock cannot occur in this code regardless of buffering.
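The three fragments can also be checked mechanically. The sketch below is a small, hypothetical model (not part of MPI, and not how any library works internally) of the worst case, a library with no buffering at all: each process runs a fixed list of blocking operations, and a send completes only when its destination is currently waiting in the matching receive. If no send can make progress while some process still has operations left, the program has deadlocked.

```c
#include <assert.h>
#include <stdbool.h>

/* Hypothetical model, not an MPI API: blocking operations with no
   buffering, so a send and its matching receive complete together. */
enum op_kind { OP_SEND, OP_RECV };

struct op {
    enum op_kind kind;
    int peer;               /* destination of a send, source of a receive */
};

#define MAX_PROCS 16
#define MAX_OPS 4

struct program {
    int nprocs;
    int nops[MAX_PROCS];             /* number of operations per process */
    struct op ops[MAX_PROCS][MAX_OPS];
};

/* Returns true if every process finishes, false if the program deadlocks. */
bool completes_without_buffering(const struct program *prog)
{
    int pc[MAX_PROCS] = {0};         /* next operation for each process */
    bool progress = true;

    assert(prog->nprocs > 0 && prog->nprocs <= MAX_PROCS);
    while (progress) {
        progress = false;
        for (int p = 0; p < prog->nprocs; p++) {
            if (pc[p] >= prog->nops[p])
                continue;            /* process p has finished */
            const struct op *mine = &prog->ops[p][pc[p]];
            if (mine->kind != OP_SEND)
                continue;            /* a receive is completed by its sender */
            int q = mine->peer;
            assert(q >= 0 && q < prog->nprocs);
            if (pc[q] >= prog->nops[q])
                continue;            /* destination already finished */
            const struct op *theirs = &prog->ops[q][pc[q]];
            if (theirs->kind == OP_RECV && theirs->peer == p) {
                pc[p]++;             /* rendezvous: both calls return */
                pc[q]++;
                progress = true;
            }
        }
    }

    for (int p = 0; p < prog->nprocs; p++)
        if (pc[p] < prog->nops[p])
            return false;            /* still blocked with nothing to match */
    return true;
}
```

Encoding the Deadlock and Unsafe fragments as two-process programs makes this model report a deadlock, while the Safe fragment completes. A real library may buffer the Unsafe case, which is exactly why such code can appear to work.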

The last thing we will consider then is how to recast our "Hello, World!" program so that it is safe. A common solution to this kind of data-exchange issue, where all processes are exchanging data with a subset of other processes, is to adopt an odd-even pairing and perform the communication in two steps. In this case communication is a rotation of data one rank to the right, so we end up with a safe program if all even ranked processes execute a send followed by a receive, while all odd ranked processes execute a receive followed by a send. The reader can verify that the sends and receives are properly paired avoiding any possibility of deadlock.
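The odd-even rule can be written down as a per-rank schedule. The sketch below is hypothetical (`odd_even_schedule` and its types are illustrative names; it only computes which call comes first and with which peer, and makes no MPI calls). It uses the same ring arithmetic as the programs in this tutorial.

```c
#include <assert.h>

/* Hypothetical description of one blocking call in the exchange. */
enum step_kind { STEP_SEND, STEP_RECV };

struct step {
    enum step_kind kind;
    int peer;   /* rank sent to, or received from */
};

/* Fill `out` with the two calls process `rank` makes, in order, when every
   process passes a message one rank to the right around a ring:
   even ranks send first, odd ranks receive first. */
void odd_even_schedule(int rank, int size, struct step out[2])
{
    assert(size > 0 && rank >= 0 && rank < size);
    struct step to_next = { STEP_SEND, (rank + 1) % size };
    struct step from_prev = { STEP_RECV, (rank + size - 1) % size };

    if (rank % 2 == 0) {
        out[0] = to_next;
        out[1] = from_prev;
    } else {
        out[0] = from_prev;
        out[1] = to_next;
    }
}
```

For 4 processes, rank 0 sends to 1 and then receives from 3, while rank 1 first receives from 0 and then sends to 2, so the first send of each even rank meets the first receive of the odd rank to its right.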

C code: phello3.c

```c
#include <stdio.h>
#include <mpi.h>

#define BUFMAX 81

int main(int argc, char *argv[])
{
    char outbuf[BUFMAX], inbuf[BUFMAX];
    int rank, size;
    int sendto, recvfrom;
    MPI_Status status;

    MPI_Init(&argc, &argv);
    MPI_Comm_rank(MPI_COMM_WORLD, &rank);
    MPI_Comm_size(MPI_COMM_WORLD, &size);

    snprintf(outbuf, BUFMAX, "Hello, world! from process %d of %d", rank, size);

    sendto = (rank + 1) % size;
    recvfrom = ((rank + size) - 1) % size;

    if (!(rank % 2))
    {
        /* even ranks: send first, then receive */
        MPI_Send(outbuf, BUFMAX, MPI_CHAR, sendto, 0, MPI_COMM_WORLD);
        MPI_Recv(inbuf, BUFMAX, MPI_CHAR, recvfrom, 0, MPI_COMM_WORLD, &status);
    }
    else
    {
        /* odd ranks: receive first, then send */
        MPI_Recv(inbuf, BUFMAX, MPI_CHAR, recvfrom, 0, MPI_COMM_WORLD, &status);
        MPI_Send(outbuf, BUFMAX, MPI_CHAR, sendto, 0, MPI_COMM_WORLD);
    }

    printf("[P_%d] process %d said: \"%s\"]\n", rank, recvfrom, inbuf);

    MPI_Finalize();
    return(0);
}
```

Fortran code: phello3.f90

```fortran
program phello3
  implicit none
  include 'mpif.h'

  integer, parameter :: BUFMAX=81
  character(len=BUFMAX) :: outbuf, inbuf
  integer :: rank, num_procs, ierr
  integer :: sendto, recvfrom
  integer :: status(MPI_STATUS_SIZE)

  call MPI_INIT(ierr)
  call MPI_COMM_RANK(MPI_COMM_WORLD, rank, ierr)
  call MPI_COMM_SIZE(MPI_COMM_WORLD, num_procs, ierr)

  ! i0 writes each integer in the minimum width, without padding
  write(outbuf, '(a,i0,a,i0)') 'Hello, world! from process ', rank, ' of ', num_procs

  sendto = mod((rank + 1), num_procs)
  recvfrom = mod(((rank + num_procs) - 1), num_procs)

  if (mod(rank, 2) == 0) then
     ! even ranks: send first, then receive
     call MPI_SEND(outbuf, BUFMAX, MPI_CHARACTER, sendto, 0, MPI_COMM_WORLD, ierr)
     call MPI_RECV(inbuf, BUFMAX, MPI_CHARACTER, recvfrom, 0, MPI_COMM_WORLD, status, ierr)
  else
     ! odd ranks: receive first, then send
     call MPI_RECV(inbuf, BUFMAX, MPI_CHARACTER, recvfrom, 0, MPI_COMM_WORLD, status, ierr)
     call MPI_SEND(outbuf, BUFMAX, MPI_CHARACTER, sendto, 0, MPI_COMM_WORLD, ierr)
  endif

  print *, 'Process', rank, ': Process', recvfrom, ' said: ', trim(inbuf)

  call MPI_FINALIZE(ierr)
end program phello3
```

In the interest of completeness, note that when the number of processes is odd, one MPI_Send in this implementation may block, because its destination is also busy sending rather than receiving. Verify for yourself that only one process can be affected in this way. The program is still safe, however, because the destination process is itself involved in a send that is correctly paired with a receive; once that send completes, the destination calls MPI_Recv and the blocked send completes as well.
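The claim that only one process can be affected can be checked by counting, for a given number of processes, the even ranks whose first call (a send) targets another even rank, which is also sending first. The function below is a hypothetical sketch of that count (`sends_that_must_wait` is an illustrative name). It assumes at least two processes, since with a single process rank 0 would be sending to itself.

```c
#include <assert.h>

/* Hypothetical check: under the odd-even scheme, even ranks start with a
   send to rank (r + 1) % size. That send has to wait if the destination is
   also even, because the destination is busy with its own send.
   Returns how many ranks are in that situation. */
int sends_that_must_wait(int size)
{
    assert(size >= 2);
    int count = 0;
    for (int r = 0; r < size; r += 2) {       /* even ranks only */
        int dest = (r + 1) % size;
        if (dest % 2 == 0)
            count++;                          /* destination sends first too */
    }
    return count;
}
```

For an even number of processes every even rank sends to an odd one, so the count is 0. For an odd number, only the last rank, N-1, wraps around to rank 0 (also even), so the count is 1.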

```
[orc-login2 ~]$ vi phello3.c
[orc-login2 ~]$ mpicc -Wall phello3.c -o phello3
[orc-login2 ~]$ mpirun -np 16 ./phello3
[P_1] process 0 said: "Hello, world! from process 0 of 16"]
[P_2] process 1 said: "Hello, world! from process 1 of 16"]
[P_5] process 4 said: "Hello, world! from process 4 of 16"]
[P_3] process 2 said: "Hello, world! from process 2 of 16"]
[P_9] process 8 said: "Hello, world! from process 8 of 16"]
[P_0] process 15 said: "Hello, world! from process 15 of 16"]
[P_12] process 11 said: "Hello, world! from process 11 of 16"]
[P_6] process 5 said: "Hello, world! from process 5 of 16"]
[P_13] process 12 said: "Hello, world! from process 12 of 16"]
[P_8] process 7 said: "Hello, world! from process 7 of 16"]
[P_7] process 6 said: "Hello, world! from process 6 of 16"]
[P_14] process 13 said: "Hello, world! from process 13 of 16"]
[P_10] process 9 said: "Hello, world! from process 9 of 16"]
[P_4] process 3 said: "Hello, world! from process 3 of 16"]
[P_15] process 14 said: "Hello, world! from process 14 of 16"]
[P_11] process 10 said: "Hello, world! from process 10 of 16"]
```

Comments and Further Reading

This tutorial has presented a brief overview of some of the key syntax, semantics and design concepts associated with MPI programming. There is still a wealth of material to consider in designing any serious parallel application, including but not limited to:

- MPI_Send/MPI_Recv variants (buffered, non-blocking, synchronous, etc.)

- collective communication/computation operations (reduction, broadcast, barrier, scatter, gather, etc.)

- defining derived data types

- communicators and topologies

- one-sided communication (and MPI-2 in general)

- efficiency issues

- parallel debugging

The following are recommended books and online references for those interested in more detail on the concepts we've discussed in this tutorial, and to continue learning about the more advanced features available to you through the Message Passing Interface.

- William Gropp, Ewing Lusk and Anthony Skjellum. Using MPI: Portable Parallel Programming with the Message-Passing Interface (2e). MIT Press, 1999.
  - Comprehensive reference covering Fortran, C and C++ bindings
- Peter S. Pacheco. Parallel Programming with MPI. Morgan Kaufmann, 1997.
  - Easy-to-follow tutorial-style approach in C