CephFS

CephFS provides a common filesystem that can be shared amongst multiple OpenStack VM hosts. Access to the service is granted via requests to cloud@tech.alliancecan.ca.

This is a fairly technical procedure that assumes basic Linux skills for creating/editing files, setting permissions, and creating mount points. For assistance in setting up this service, write to cloud@tech.alliancecan.ca.

Procedure

If you do not already have a quota for the service, you will need to request this through cloud@tech.alliancecan.ca. In your request please provide the following:

- OpenStack project name

- amount of quota required in GB

- number of shares required

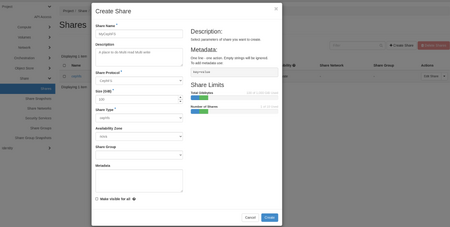

- Create the share.

- In Project --> Share --> Shares, click on +Create Share.

- Share Name = enter a name that identifies your project (e.g. project-name-shareName)

- Share Protocol = CephFS

- Size = size you need for this share

- Share Type = cephfs

- Availability Zone = nova

- Do not check Make visible for all, otherwise the share will be accessible by all users in all projects.

- Click on the Create button.

- Create an access rule to generate an access key.

- In Project --> Share --> Shares --> Actions column, select Manage Rules from the drop-down menu.

- Click on the +Add Rule button (right of page).

- Access Type = cephx

- Access Level = select read-write or read-only (you can create multiple rules for either access level if required)

- Access To = choose a key name that describes the key. This name is important because it is used in the CephFS client configuration on the VM; this page uses MyCephFS-RW.

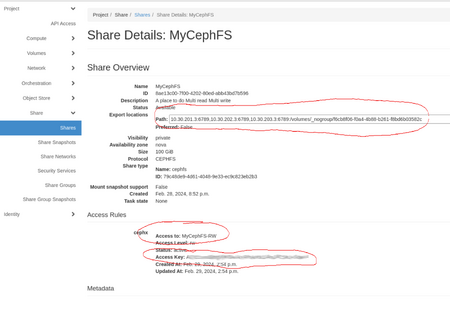

- Note the share details which you will need later.

- In Project --> Share --> Shares, click on the name of the share.

- In the Share Overview, note the three elements circled in red in the "Properly configured" image: the Path, which is used in the mount command on the VM; the Access Rules entry, which is the client name; and the Access Key, which lets the VM's client connect.
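The dashboard steps above can in principle also be scripted with the OpenStack client. The following is a minimal sketch, not the documented procedure: it assumes the `openstack share` commands (provided by the Manila client plugin) are installed and permitted for your project, and `create_share` and `add_access_rule` are hypothetical helper names.

```shell
#!/usr/bin/env bash
# Hypothetical helpers mirroring the dashboard fields above.
# Assumes the "openstack share" commands (Manila plugin) are available.

# create_share NAME SIZE_GB
create_share() {
    local name="$1" size_gb="$2"
    openstack share create --name "$name" --share-type cephfs \
        --availability-zone nova CephFS "$size_gb"
}

# add_access_rule SHARE KEY_NAME [rw|ro]
# Run this only once the share status is "available".
add_access_rule() {
    local share="$1" key_name="$2" level="${3:-rw}"
    openstack share access create --access-level "$level" \
        "$share" cephx "$key_name"
}

# Example (not executed here):
#   create_share project-name-shareName 10
#   add_access_rule project-name-shareName MyCephFS-RW rw
#   openstack share show project-name-shareName   # path and access details
```

If this works on your cloud, `openstack share access list project-name-shareName` should list the rule together with its access key.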

Attach the CephFS network to your VM

On Arbutus

On Arbutus, the CephFS network is already exposed to your VM. There is nothing to do here; go to the next section.

On SD4H/Juno

On SD4H/Juno, you need to explicitly attach the CephFS network to the VM.

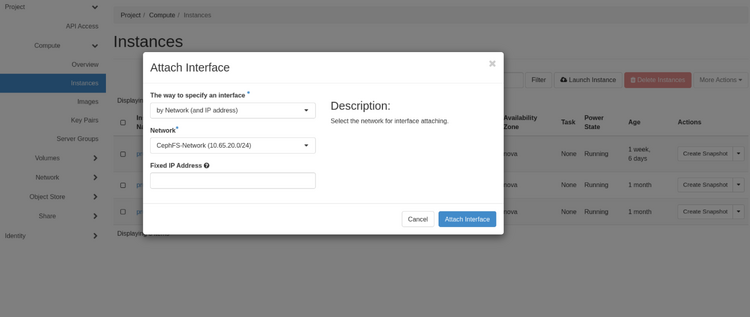

- With the web GUI

For each VM you need to attach, select Instance --> Action --> Attach interface, select the CephFS-Network, and leave the Fixed IP Address box empty.

- With the OpenStack client

List the servers and select the ID of the server you need to attach to the CephFS network.

$ openstack server list

+--------------------------------------+--------------+--------+-------------------------------------------+--------------------------+----------+

| ID | Name | Status | Networks | Image | Flavor |

+--------------------------------------+--------------+--------+-------------------------------------------+--------------------------+----------+

| 1b2a3c21-c1b4-42b8-9016-d96fc8406e04 | prune-dtn1 | ACTIVE | test_network=172.16.1.86, 198.168.189.3 | N/A (booted from volume) | ha4-15gb |

| 0c6df8ea-9d6a-43a9-8f8b-85eb64ca882b | prune-mgmt1 | ACTIVE | test_network=172.16.1.64 | N/A (booted from volume) | ha4-15gb |

| 2b7ebdfa-ee58-4919-bd12-647a382ec9f6 | prune-login1 | ACTIVE | test_network=172.16.1.111, 198.168.189.82 | N/A (booted from volume) | ha4-15gb |

+--------------------------------------+--------------+--------+----------------------------------------------+--------------------------+----------+

Select the ID of the VM you want to attach (we pick the first one here) and run

$ openstack server add network 1b2a3c21-c1b4-42b8-9016-d96fc8406e04 CephFS-Network

$ openstack --os-cloud test server list

+--------------------------------------+--------------+--------+---------------------------------------------------------------------+--------------------------+----------+

| ID | Name | Status | Networks | Image | Flavor |

+--------------------------------------+--------------+--------+---------------------------------------------------------------------+--------------------------+----------+

| 1b2a3c21-c1b4-42b8-9016-d96fc8406e04 | prune-dtn1 | ACTIVE | CephFS-Network=10.65.20.71; test_network=172.16.1.86, 198.168.189.3 | N/A (booted from volume) | ha4-15gb |

| 0c6df8ea-9d6a-43a9-8f8b-85eb64ca882b | prune-mgmt1 | ACTIVE | test_network=172.16.1.64 | N/A (booted from volume) | ha4-15gb |

| 2b7ebdfa-ee58-4919-bd12-647a382ec9f6 | prune-login1 | ACTIVE | test_network=172.16.1.111, 198.168.189.82 | N/A (booted from volume) | ha4-15gb |

+--------------------------------------+--------------+--------+------------------------------------------------------------------------+--------------------------+----------+

We can see that the CephFS network is attached to the first VM.
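To check the attachment from a script rather than by reading the table, something like the following could work. It is a minimal sketch: `has_network` is a hypothetical helper, and it assumes the address string has the `name=ip` form shown in the Networks column above; other client versions may print this field differently.

```shell
#!/usr/bin/env bash
# has_network is a hypothetical helper: it succeeds when the given network
# name appears as "name=" in a Networks string like the ones shown above.
has_network() {
    local networks="$1" name="$2"
    case "$networks" in
        *"${name}="*) return 0 ;;
        *) return 1 ;;
    esac
}

# Example using the Networks value of prune-dtn1 after attaching.
networks='CephFS-Network=10.65.20.71; test_network=172.16.1.86, 198.168.189.3'
if has_network "$networks" "CephFS-Network"; then
    echo "attached"
else
    echo "not attached"
fi
```

On a live cloud the string could be taken from `openstack server show <ID> -f value -c addresses` instead of being hard-coded.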

VM configuration: install and configure CephFS client

- Install the required packages for the Red Hat family (RHEL, CentOS, Fedora, Rocky, Alma).

Check the available releases at https://download.ceph.com/ and look for recent rpm-* directories; quincy is the latest stable release at the time of writing. The compatible distributions are listed at https://download.ceph.com/rpm-quincy/. We show the full installation for el8.

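Install the relevant repositories for access to the Ceph client packages, for example by creating a file such as /etc/yum.repos.d/ceph.repo with the following content: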

[Ceph]

name=Ceph packages for $basearch

baseurl=http://download.ceph.com/rpm-quincy/el8/$basearch

enabled=1

gpgcheck=1

type=rpm-md

gpgkey=https://download.ceph.com/keys/release.asc

[Ceph-noarch]

name=Ceph noarch packages

baseurl=http://download.ceph.com/rpm-quincy/el8/noarch

enabled=1

gpgcheck=1

type=rpm-md

gpgkey=https://download.ceph.com/keys/release.asc

[ceph-source]

name=Ceph source packages

baseurl=http://download.ceph.com/rpm-quincy/el8/SRPMS

enabled=1

gpgcheck=1

type=rpm-md

gpgkey=https://download.ceph.com/keys/release.asc
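For a different release or EL version, the same repository definition can be generated rather than edited by hand. This is only a sketch: `render_ceph_repo` is a hypothetical helper, and the target file name /etc/yum.repos.d/ceph.repo used in the note after it is an assumption based on the usual dnf layout.

```shell
#!/usr/bin/env bash
# render_ceph_repo RELEASE EL_VERSION
# Hypothetical helper that prints the repository definition shown above.
render_ceph_repo() {
    local release="$1" el="$2"
    local base="http://download.ceph.com/rpm-${release}/${el}"
    local key="https://download.ceph.com/keys/release.asc"
    # \$basearch is escaped so that dnf, not the shell, expands it.
    cat <<EOF
[Ceph]
name=Ceph packages for \$basearch
baseurl=${base}/\$basearch
enabled=1
gpgcheck=1
type=rpm-md
gpgkey=${key}

[Ceph-noarch]
name=Ceph noarch packages
baseurl=${base}/noarch
enabled=1
gpgcheck=1
type=rpm-md
gpgkey=${key}

[ceph-source]
name=Ceph source packages
baseurl=${base}/SRPMS
enabled=1
gpgcheck=1
type=rpm-md
gpgkey=${key}
EOF
}

# Print the el8/quincy definition used on this page.
render_ceph_repo quincy el8
```

To install it, the output could be piped to the repository file: `render_ceph_repo quincy el8 | sudo tee /etc/yum.repos.d/ceph.repo`.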

The EPEL repository also needs to be in place:

sudo dnf install epel-release

You can now install the Ceph libraries, the CephFS client and other dependencies:

sudo dnf install -y libcephfs2 python3-cephfs ceph-common python3-ceph-argparse

- Install the required packages for the Debian family (Debian, Ubuntu, Mint, etc.)

You can add the repository once you have determined your distribution's {codename} with lsb_release -sc:

sudo apt-add-repository 'deb https://download.ceph.com/debian-quincy/ {codename} main'
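The {codename} substitution can be done by the shell instead of by hand. The sketch below only builds the repository line; `ceph_apt_line` is a hypothetical helper, and `jammy` is just an example codename.

```shell
#!/usr/bin/env bash
# ceph_apt_line CODENAME [RELEASE]
# Hypothetical helper: prints the apt source line for the given codename.
ceph_apt_line() {
    local codename="$1" release="${2:-quincy}"
    printf 'deb https://download.ceph.com/debian-%s/ %s main\n' \
        "$release" "$codename"
}

# Example with a fixed codename; on a real host use "$(lsb_release -sc)".
ceph_apt_line jammy
```

On the VM this could be combined as `sudo apt-add-repository "$(ceph_apt_line "$(lsb_release -sc)")"`, followed by `sudo apt-get update` and installation of the same client packages as for the Red Hat family. The repository signing key (https://download.ceph.com/keys/release.asc) may also need to be trusted by apt before the update succeeds.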

Configure the Ceph client:

Once the client is installed, you can create a ceph.conf file. Note that the mon host values differ between clouds; two versions are shown below, in the same order as above (Arbutus first, then SD4H/Juno).

[global]

admin socket = /var/run/ceph/$cluster-$name-$pid.asok

client reconnect stale = true

debug client = 0/2

fuse big writes = true

mon host = 10.30.201.3:6789,10.30.202.3:6789,10.30.203.3:6789

[client]

quota = true

[global]

admin socket = /var/run/ceph/$cluster-$name-$pid.asok

client reconnect stale = true

debug client = 0/2

fuse big writes = true

mon host = 10.65.0.10:6789,10.65.0.12:6789,10.65.0.11:6789

[client]

quota = true

You can find the monitor information in the Path field of the share details, which is used to mount the volume. If the value shown on the web page differs from what is shown here, this wiki page is out of date.
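Splitting the Path value into its monitor list and volume path can be done with shell parameter expansion. This is a sketch under the assumption that the Path has the form `<monitors>:<volume path>`, with the volume path starting at the first `:/`; `mon_hosts_of` and `volume_of` are hypothetical helpers, and the sample value is assembled from the addresses and path used on this page.

```shell
#!/usr/bin/env bash
# Hypothetical helpers; they assume the Path looks like
#   mon1:port,mon2:port,mon3:port:/volumes/...
mon_hosts_of() { printf '%s\n' "${1%%:/*}"; }   # everything before the first ":/"
volume_of()    { printf '/%s\n' "${1#*:/}"; }   # everything after it, with "/" restored

share_path='10.65.0.10:6789,10.65.0.12:6789,10.65.0.11:6789:/volumes/_nogroup/f6cb8f06-f0a4-4b88-b261-f8bd6b03582c'

echo "mon host = $(mon_hosts_of "$share_path")"
echo "volume   = $(volume_of "$share_path")"
```

The first value is what goes on the mon host line of ceph.conf; the second is the path used when mounting.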

You also need to put your client name and secret in the ceph.keyring file:

[client.MyCephFS-RW]

key = <access Key>

Again, the access key and client name (here MyCephFS-RW) are found under the access rules on your project web page: in Project --> Share --> Shares, click on the name of the share.
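Because the keyring holds a secret, it is reasonable to create it with owner-only permissions. The following is a sketch, not a required step: `write_keyring` is a hypothetical helper and the key value is a placeholder.

```shell
#!/usr/bin/env bash
# write_keyring FILE CLIENT_NAME ACCESS_KEY
# Hypothetical helper: writes a keyring readable only by its owner.
write_keyring() {
    local file="$1" client="$2" key="$3"
    # umask 077 in a subshell so a newly created file is owner-only.
    ( umask 077 && printf '[client.%s]\nkey = %s\n' "$client" "$key" > "$file" ) || return 1
    # Also tighten permissions if the file already existed.
    chmod 600 "$file"
}

# Demonstration on a temporary file with a placeholder key.
demo="$(mktemp)"
write_keyring "$demo" MyCephFS-RW 'REPLACE-WITH-ACCESS-KEY'
cat "$demo"
rm -f "$demo"
```

For a system-wide kernel mount the target would more likely be a root-owned file such as /etc/ceph/ceph.keyring (an assumption about your layout), in which case the helper has to run as root.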

- Retrieve the connection information from the share page for your connection

- Open up the share details by clicking the name of the share in the Shares page.

- Copy the entire path of the share for mounting the filesystem.

- Mount the filesystem

- Create a mount point directory somewhere on your host (/cephfs is used here).

mkdir /cephfs

- Via kernel mount using the ceph driver. You can make the mount permanent by adding the following to the VM's /etc/fstab:

:/volumes/_nogroup/f6cb8f06-f0a4-4b88-b261-f8bd6b03582c /cephfs/ ceph name=MyCephFS-RW 0 2

:/volumes/_nogroup/f6cb8f06-f0a4-4b88-b261-f8bd6b03582c /cephfs/ ceph name=MyCephFS-RW,mds_namespace=cephfs_4_2,x-systemd.device-timeout=30,x-systemd.mount-timeout=30,noatime,_netdev,rw 0 2

Note:

There is a non-standard : before the device path; it is not a typo!

The mount options differ between systems.

The namespace option (mds_namespace) is required for SD4H/Juno, while the other options are performance tweaks.
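Both fstab variants follow the same pattern, which can be sketched as a small function. `fstab_entry` is a hypothetical helper; it only prints the line and does not edit /etc/fstab.

```shell
#!/usr/bin/env bash
# fstab_entry VOLUME_PATH MOUNTPOINT CLIENT_NAME [EXTRA_OPTIONS]
# Hypothetical helper: prints an fstab line in the format shown above.
fstab_entry() {
    local volume="$1" mountpoint="$2" client="$3" extra="${4:-}"
    local opts="name=${client}"
    if [ -n "$extra" ]; then
        opts="${opts},${extra}"
    fi
    # The leading ":" is intentional (see the note above).
    printf ':%s %s ceph %s 0 2\n' "$volume" "$mountpoint" "$opts"
}

vol=/volumes/_nogroup/f6cb8f06-f0a4-4b88-b261-f8bd6b03582c

# Minimal entry, then one carrying the namespace option for SD4H/Juno.
fstab_entry "$vol" /cephfs/ MyCephFS-RW
fstab_entry "$vol" /cephfs/ MyCephFS-RW 'mds_namespace=cephfs_4_2,noatime,_netdev,rw'
```

After adding a line to /etc/fstab, `sudo mount -a` followed by `findmnt /cephfs` is one way to confirm that the entry works before rebooting.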

- It can also be done from the command line

sudo mount -t ceph :/volumes/_nogroup/f6cb8f06-f0a4-4b88-b261-f8bd6b03582c /cephfs/ -o name=MyCephFS-RW

sudo mount -t ceph :/volumes/_nogroup/f6cb8f06-f0a4-4b88-b261-f8bd6b03582c /cephfs/ -o name=MyCephFS-RW,mds_namespace=cephfs_4_2,x-systemd.device-timeout=30,x-systemd.mount-timeout=30,noatime,_netdev,rw

- Or via ceph-fuse if the file system needs to be mounted in user space

- There is no leading : before the path here.

Install the ceph-fuse package:

sudo dnf install ceph-fuse

Let the FUSE mount be accessible in user space by uncommenting user_allow_other in the /etc/fuse.conf file:

# mount_max = 1000

user_allow_other
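Whether the option is really active (and not still commented out) can be checked with a short test. This is a sketch: `fuse_allows_other` is a hypothetical helper, and /etc/fuse.conf is assumed as the default location.

```shell
#!/usr/bin/env bash
# fuse_allows_other [FILE]
# Hypothetical helper: succeeds if user_allow_other is present and uncommented.
fuse_allows_other() {
    local conf="${1:-/etc/fuse.conf}"
    grep -Eq '^[[:space:]]*user_allow_other[[:space:]]*$' "$conf"
}

# Demonstration on a temporary copy: first commented out, then enabled.
demo="$(mktemp)"
printf '# mount_max = 1000\n#user_allow_other\n' > "$demo"
if fuse_allows_other "$demo"; then echo "enabled"; else echo "still commented out"; fi
printf '# mount_max = 1000\nuser_allow_other\n' > "$demo"
if fuse_allows_other "$demo"; then echo "enabled"; else echo "still commented out"; fi
rm -f "$demo"
```

On the VM itself, calling `fuse_allows_other` with no argument checks the system file.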

You can now mount CephFS in a user's home directory:

mkdir ~/my_cephfs

ceph-fuse ~/my_cephfs/ --id=MyCephFS-RW --conf="$HOME/ceph.conf" --keyring="$HOME/ceph.keyring" --client-mountpoint=/volumes/_nogroup/f6cb8f06-f0a4-4b88-b261-f8bd6b03582c

Note that the client name is given here with --id. The content of the ceph.conf and ceph.keyring files is exactly the same as for the ceph kernel mount.
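Whichever method is used, a script can confirm that the filesystem is mounted by looking at the kernel's mount table. The sketch below assumes a Linux host with /proc/mounts and assumes that kernel mounts appear with type `ceph` and ceph-fuse mounts with type `fuse.ceph-fuse`; `cephfs_mounted` is a hypothetical helper.

```shell
#!/usr/bin/env bash
# cephfs_mounted MOUNTPOINT [MOUNTS_FILE]
# Hypothetical helper: succeeds if MOUNTPOINT is listed as a CephFS mount.
cephfs_mounted() {
    local mountpoint="${1%/}" mounts="${2:-/proc/mounts}"
    # Field 2 is the mount point, field 3 the filesystem type.
    awk -v mp="$mountpoint" \
        '$2 == mp && ($3 == "ceph" || $3 == "fuse.ceph-fuse") { found = 1 }
         END { exit !found }' "$mounts"
}

if [ -r /proc/mounts ]; then
    if cephfs_mounted /cephfs; then
        echo "/cephfs is mounted"
    else
        echo "/cephfs is not mounted"
    fi
fi
```

A trailing slash on the mount point is stripped before comparison, so `/cephfs/` and `/cephfs` are treated the same.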

Notes

- A particular share can have more than one user key provisioned for it.

- This allows more granular access to the filesystem, for example if you need some hosts to access the filesystem only in a read-only capacity.

- If you have multiple keys for a share, you can add the extra keys to your host and modify the above mounting procedure.

- This service is not available to hosts outside of the OpenStack cluster.