Multi-Instance GPU

Introduction

Many programs are unable to fully use modern GPUs such as the NVIDIA A100 and H100. Multi-Instance GPU (MIG) is a technology that partitions a single GPU into multiple instances, each of which behaves as an independent GPU. Each instance gets a fixed slice of the GPU's computational resources and memory, isolated from the other instances by on-chip protections.

MIGs can be less wasteful than full GPUs, and they are billed accordingly. Jobs submitted on a MIG instead of a full GPU use less of your allocated priority, so you will be able to run more of them with shorter wait times.

Which jobs should use a MIG instead of full GPUs?

Jobs that use less than half of the computing power of a GPU and less than half of the available GPU memory should be evaluated and tested on a MIG. In most cases, these jobs will run just as fast on a MIG and consume less than half of the computing resources.

Limitations of MIGs

MIGs do not support CUDA inter-process communication (IPC), which enables data transfers over NVLink and NVSwitch. Launching an executable on more than one MIG at a time therefore does not improve performance and should be avoided.

Jobs that require many CPU cores per GPU may also require a full GPU instead of a MIG. The maximum number of CPU cores per MIG depends on the number of cores per full GPU and the MIG configuration and will vary from cluster to cluster.

Available MIG configurations

MIGs are currently available on the Narval cluster, on its A100 GPUs. While there are many possible configurations for MIGs, the following configurations are currently activated on Narval:

- MIG 3g.20gb

- MIG 4g.20gb

The names describe the size of the MIG: 3g.20gb has 20GB of GPU RAM and offers 3/8 of the computing performance of a full A100 GPU, while 4g.20gb has the same 20GB of GPU RAM and offers 4/8 of the computing performance. Using less powerful MIGs will have a lower impact on your allocation and priority.
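As a small illustration of the naming scheme, the sketch below splits a profile name into its two parts. The function name describe_mig is hypothetical, and the "N/8" reading simply follows the convention used on this page for Narval's A100 MIGs; it is not an official tool.

```shell
# Hypothetical helper: decode a MIG profile name such as "3g.20gb".
# Assumes the convention described above: "<N>g" means N/8 of the
# A100 computing performance and "<M>gb" means M GB of GPU memory.
describe_mig() {
    profile=$1                 # e.g. "3g.20gb"
    slices=${profile%%g.*}     # text before "g."  -> "3"
    mem=${profile#*g.}         # text after "g."   -> "20gb"
    mem=${mem%gb}              # drop the unit     -> "20"
    echo "${slices}/8 of A100 compute, ${mem} GB of GPU memory"
}

describe_mig 3g.20gb
describe_mig 4g.20gb
```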

The recommended maximum number of cores per MIG on Narval is:

- 3g.20gb: maximum 6 cores

- 4g.20gb: maximum 6 cores

To request a MIG of a given flavor, include one of the following options in your job submission script:

--gres=gpu:a100_3g.20gb:1

--gres=gpu:a100_4g.20gb:1

Examples

Request 1 MIG of power 3/8 and size 20GB for a 1-hour interactive job:

salloc --time=1:0:0 --nodes=1 --ntasks-per-node=1 --cpus-per-task=2 --gres=gpu:a100_3g.20gb:1 --mem=20gb --account=def-someuser

Request 1 MIG of power 4/8 for a 24-hour batch job using the maximum recommended number of cores and system memory:

#!/bin/bash

#SBATCH --nodes=1

#SBATCH --gres=gpu:a100_4g.20gb:1

#SBATCH --ntasks=1

#SBATCH --cpus-per-task=6 # There are 6 CPU cores per 3g.20gb and 4g.20gb on Narval.

#SBATCH --mem=40gb # Request twice as much system memory as MIG memory

#SBATCH --time=24:00:00

#SBATCH --account=def-someuser

hostname

nvidia-smi

Finding which of your jobs to migrate to a MIG

There are currently two ways to monitor the resource usage of a GPU job. The first is to look up current and past jobs on the Narval usage portal, under the Job stats tab.

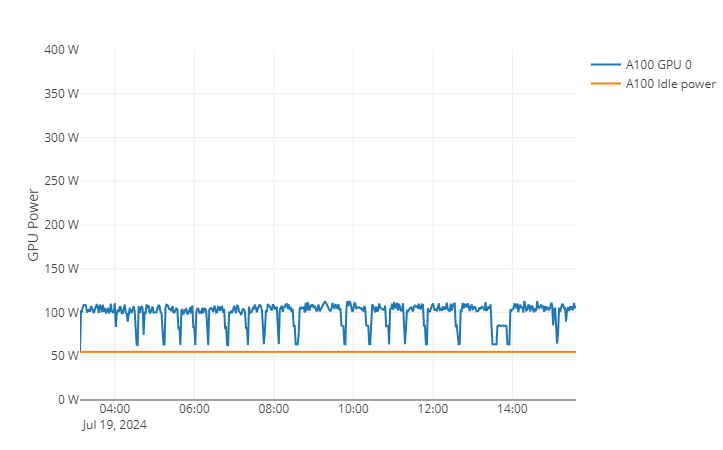

Electric power consumption is a good indicator of the total computing power requested from the GPU. For instance, the following job requested one A100 GPU with a maximum TDP of 400W, but only used 100W on average, which is only 50W more than the idle power consumption:

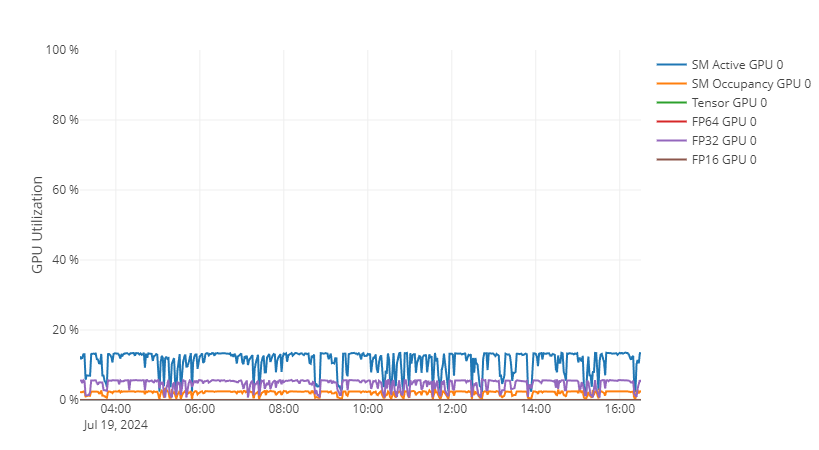

GPU utilisation may also provide insight into how the GPU is used in cases where electric power consumption alone is not conclusive. For this example job, the GPU utilisation graph supports the conclusion drawn from the power consumption graph, namely that the job uses less than 25% of the available computing power of a full A100 GPU:

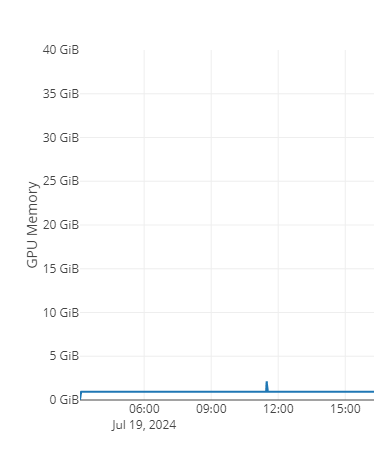

The final things to consider are the maximum amount of GPU memory and the average number of CPU cores required to run the job. For this example, the job uses a maximum of 3GB of GPU memory out of the 40GB of an A100 GPU.

It was also launched using a single CPU core. Taking these three metrics into account (computing power, GPU memory and CPU cores), we see that the job could easily run on a 3g.20gb or 4g.20gb MIG with power and memory to spare.
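The reasoning above can be sketched as a small decision helper. This is only an illustration: the function name suggest_mig is hypothetical, and the thresholds are assumptions read off this page (3/8 and 4/8 of the computing power, 20GB of GPU memory, and 6 cores per MIG on Narval). Always confirm the choice by testing the job on the MIG.

```shell
# Hypothetical helper: suggest a Narval GPU request from three job metrics.
#   $1 = average GPU utilisation in percent (integer)
#   $2 = peak GPU memory in GB (integer)
#   $3 = number of CPU cores the job needs
# Thresholds are assumptions taken from the text, not official limits.
suggest_mig() {
    if [ "$2" -ge 20 ] || [ "$3" -gt 6 ]; then
        # Does not fit in 20GB of MIG memory, or needs more than 6 cores
        echo "full-gpu"
    elif [ $(( $1 * 8 )) -lt 300 ]; then
        # Utilisation below 3/8 (37.5%) of a full A100
        echo "a100_3g.20gb"
    elif [ $(( $1 * 2 )) -lt 100 ]; then
        # Utilisation below 4/8 (50%) of a full A100
        echo "a100_4g.20gb"
    else
        echo "full-gpu"
    fi
}

# The example job: about 25% utilisation, 3GB of GPU memory, 1 core
suggest_mig 25 3 1
```

The printed name can be used directly in a request such as --gres=gpu:a100_3g.20gb:1.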

The second way to monitor the usage of a running job is to attach to the node where the job is currently running and use nvidia-smi to read the GPU metrics in real time. This will not provide maximum and average values for memory and power over the full job, but it may be helpful for troubleshooting.
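If you do want rough average and peak values from this second approach, one option is to log periodic nvidia-smi samples to a file and summarize them afterwards. The sketch below assumes you can attach to the job with srun; the file name gpu_samples.csv, the 5-second interval and the function name summarize_gpu_log are all illustrative. Some fields may be reported as [N/A] depending on the GPU mode, and such lines are skipped.

```shell
# Attach to the node of a running job (<JOBID> is a placeholder):
#   srun --jobid <JOBID> --pty bash
# Then sample power draw (W) and memory used (MiB) every 5 seconds:
#   nvidia-smi --query-gpu=power.draw,memory.used \
#              --format=csv,noheader,nounits -l 5 > gpu_samples.csv

# Hypothetical helper: print average power and peak memory from such a log.
summarize_gpu_log() {
    awk -F', *' '
        # Keep only lines where both fields are numeric (skips "[N/A]")
        $1 ~ /^[0-9.]+$/ && $2 ~ /^[0-9.]+$/ {
            power += $1; n++
            if ($2 + 0 > peak + 0) peak = $2
        }
        END {
            if (n == 0) { print "no samples"; exit 1 }
            printf "avg_power_w=%.1f max_mem_mib=%d samples=%d\n", power / n, peak, n
        }
    ' "$1"
}
```

Calling summarize_gpu_log gpu_samples.csv prints a single summary line. The values only cover the period during which samples were collected, so the usage portal remains the better source for whole-job statistics.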