CUDA tutorial/fr: Difference between revisions

No edit summary |

(Updating to match new version of source page) |

||

| Line 1: | Line 1: | ||

<languages /> | <languages /> | ||

<div class="mw-translate-fuzzy"> | |||

[[Category:Software]] | [[Category:Software]] | ||

=Introduction= | =Introduction= | ||

| Line 37: | Line 38: | ||

*'''Hôte''' : désigne le CPU et la mémoire principale | *'''Hôte''' : désigne le CPU et la mémoire principale | ||

*'''Composant''' : désigne le GPU et sa mémoire | *'''Composant''' : désigne le GPU et sa mémoire | ||

</div> | |||

=What is CUDA?= | |||

'''CUDA''' = '''C'''ompute '''U'''nified '''D'''evice '''A'''rchitecture | |||

Provides access to instructions and memory of massively parallel elements in a GPU. | |||

Another definition: CUDA is a scalable parallel programming model and software environment for parallel computing. | |||

=CUDA GPU Architecture = | |||

There two main components of the GPU: | |||

* Global memory | |||

** Similar to CPU memory | |||

** Accessible by both CPU and GPU | |||

*Streaming multiprocessors (SMs) | |||

** Each SM consists or many streaming processors (SPs) | |||

**They perform actual computations | |||

**Each SM has its own control init, registers, execution pipelines, etc | |||

=CUDA programming model= | |||

Before we start talking about the programming model, let's go over some useful terminology: | |||

*Host – The CPU and its memory (host memory) | |||

*Device – The GPU and its memory (device memory) | |||

The CUDA programming model is a heterogeneous model in which both the CPU and GPU are used. | |||

CUDA code is capable of managing memory of both the CPU and the GPU as well as executing GPU functions, called kernels. Such kernels are executed by many GPU threads in parallel. Here is a five step recipe for a typical CUDA code: | |||

* Declare and allocate both the host and device memories | |||

* Initialize the host memory | |||

* Transfer data from Host memory to device memory | |||

* Execute GPU functions (kernels) | |||

* Transfer data back to the host memory | |||

<div class="mw-translate-fuzzy"> | |||

Le modèle CUDA est un modèle hétérogène qui utilise à la fois le CPU et le GPU. | Le modèle CUDA est un modèle hétérogène qui utilise à la fois le CPU et le GPU. | ||

Le code CUDA peut gérer les deux types de mémoires, la mémoire principale du CPU et la mémoire du composant GPU le code exécute aussi les fonctions du GPU appelées noyaux (''kernels''). Ces fonctions sont exécutées en parallèle par plusieurs fils GPU. Le processus comporte cinq étapes : | Le code CUDA peut gérer les deux types de mémoires, la mémoire principale du CPU et la mémoire du composant GPU le code exécute aussi les fonctions du GPU appelées noyaux (''kernels''). Ces fonctions sont exécutées en parallèle par plusieurs fils GPU. Le processus comporte cinq étapes : | ||

| Line 46: | Line 76: | ||

# retour des données à la mémoire principale | # retour des données à la mémoire principale | ||

=Modèle d'exécution= | =Modèle d'exécution= | ||

</div> | |||

= CUDA | = CUDA block-threading model = | ||

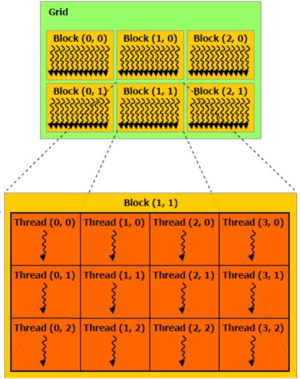

[[File:Cuda-threads-blocks.png|thumbnail|CUDA block-threading model where threads are organized into blocks while blocks are further organized into grid. ]] | [[File:Cuda-threads-blocks.png|thumbnail|CUDA block-threading model where threads are organized into blocks while blocks are further organized into grid. ]] | ||

Given very large number of threads | Given a very large number of threads - in order to achieve massive parallelism one has to use all the threads possible - in a CUDA kernel, one needs to organize them somehow. In CUDA, all the threads are structured in threading blocks, the blocks are further organized into grids, as shown in the accompanying figure. In distributing the threads we must make sure that the following conditions are satisfied: | ||

* threads within a block cooperate via the shared memory | * threads within a block cooperate via the shared memory | ||

* threads in different blocks can not cooperate | * threads in different blocks can not cooperate | ||

In this model the threads within a block work on the same set of instructions (but perhaps with different data sets) and exchange data between each other via shared memory. Threads in other blocks do the same thing (see | In this model the threads within a block work on the same set of instructions (but perhaps with different data sets) and exchange data between each other via shared memory. Threads in other blocks do the same thing (see the figure). | ||

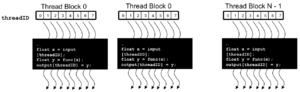

[[File:Cuda_threads.png|thumbnail|Threads within a block intercommunicate via shared memory . ]] | [[File:Cuda_threads.png|thumbnail|Threads within a block intercommunicate via shared memory. ]] | ||

Each thread uses IDs to decide what data to work on: | Each thread uses IDs to decide what data to work on: | ||

* Block IDs: 1D or 2D (blockIdx.x, blockIdx.y) | * Block IDs: 1D or 2D (blockIdx.x, blockIdx.y) | ||

* Thread IDs: 1D, 2D, or 3D (threadIdx.x, threadIdx.y, threadIdx.z) | * Thread IDs: 1D, 2D, or 3D (threadIdx.x, threadIdx.y, threadIdx.z) | ||

Such model simplifies memory addressing when processing | Such a model simplifies memory addressing when processing multi-dimensional data. | ||

= | = Thread scheduling = | ||

Usually streaming microprocessor (SM) executes one threading block at a time. The code is executed in groups of 32 threads (called | Usually a streaming microprocessor (SM) executes one threading block at a time. The code is executed in groups of 32 threads (called warps). A hardware scheduller is free to assign blocks to any SM at any time. Furthermore, when an SM gets the block assigned to it, it does not mean that this particular block will be executed non-stop. In fact, the scheduler can postpone/suspend execution of such blocks under certain conditions when e.g. data becomes unavailable (indeed, it is quite time-consuming to read data from the global GPU memory). When it happens, the scheduler executes another threading block which is ready for execution. This is a so-called zero-overhead scheduling which makes the execution more streamlined so that SMs are not idle. | ||

= GPU | = Types of GPU memory in CUDA = | ||

There are several | There are several types of memories available for CUDA operations: | ||

* Global memory | * Global memory | ||

** off-chip, good for I/O, but relatively slow | ** off-chip, good for I/O, but relatively slow | ||

* Shared memory | * Shared memory | ||

** on-chip, good for thread collaboration, very fast | ** on-chip, good for thread collaboration, very fast | ||

* Registers | * Registers and Local Memory | ||

** thread work space , very fast | ** thread work space , very fast | ||

* Constant memory | * Constant memory | ||

= | = A few basic CUDA operations = | ||

== CUDA | == CUDA memory allocation == | ||

* cudaMalloc((void**)&array, size) | * cudaMalloc((void**)&array, size) | ||

** Allocates object in the device memory. Requires address of a pointer of allocated array and size. | ** Allocates object in the device memory. Requires address of a pointer of allocated array and size. | ||

| Line 81: | Line 112: | ||

** Deallocates object from the memory. Requires just a pointer to the array. | ** Deallocates object from the memory. Requires just a pointer to the array. | ||

== CUDA | == CUDA data transfer == | ||

* cudaMemcpy(array_dest, array_orig, size, direction) | * cudaMemcpy(array_dest, array_orig, size, direction) | ||

** Copy the data from either device to host or host to device . Requires pointers to the arrays, size and the direction type (cudaMemcpyHostToDevice, cudaMemcpyDeviceToHost, cudaMemcpyDeviceToDevice, etc) | ** Copy the data from either device to host or host to device. Requires pointers to the arrays, size and the direction type (cudaMemcpyHostToDevice, cudaMemcpyDeviceToHost, cudaMemcpyDeviceToDevice, etc.) | ||

* cudaMemcpyAsync | * cudaMemcpyAsync | ||

** Same as cudaMemcpy, but transfers the data asynchronously which means it' | ** Same as cudaMemcpy, but transfers the data asynchronously which means it doesn't block the execution of other processes. | ||

= | = A simple CUDA C program= | ||

The following example shows how to add two numbers on the GPU using CUDA. Note that this is just an exercise, it's very simple, so it will not scale | The following example shows how to add two numbers on the GPU using CUDA. Note that this is just an exercise, it's very simple, so it will not scale at all. | ||

<syntaxhighlight lang="cpp" line highlight="1,5"> | <syntaxhighlight lang="cpp" line highlight="1,5"> | ||

__global__ void add (int *a, int *b, int *c){ | __global__ void add (int *a, int *b, int *c){ | ||

| Line 120: | Line 151: | ||

Are we missing anything ? | Are we missing anything ? | ||

That code does not look parallel ! | That code does not look parallel! | ||

Solution: | Solution: Let's look at what's inside the triple brackets in the kernel call and make some changes : | ||

<syntaxhighlight lang="cpp" line highlight="1,5"> | <syntaxhighlight lang="cpp" line highlight="1,5"> | ||

add <<< N, 1 >>> (dev_a, dev_b, dev_c); | add <<< N, 1 >>> (dev_a, dev_b, dev_c); | ||

</syntaxhighlight> | </syntaxhighlight> | ||

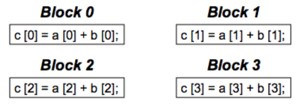

Here we replaced 1 by N, so that N different | Here we replaced 1 by N, so that N different CUDA blocks will be executed at the same time. However, in order to achieve parallelism we need to make some changes to the kernel as well: | ||

<syntaxhighlight lang="cpp" line highlight="1,5"> | <syntaxhighlight lang="cpp" line highlight="1,5"> | ||

__global__ void add (int *a, int *b, int *c){ | __global__ void add (int *a, int *b, int *c){ | ||

| Line 131: | Line 162: | ||

c[blockIdx.x] = a[blockIdx.x] + b[blockIdx.x]; | c[blockIdx.x] = a[blockIdx.x] + b[blockIdx.x]; | ||

</syntaxhighlight> | </syntaxhighlight> | ||

where blockIdx.x is the unique number identifying a | where blockIdx.x is the unique number identifying a CUDA block. This way each CUDA block adds a value from a[ ] to b[ ]. | ||

[[File:Cuda-blocks-parallel.png|thumbnail|CUDA blocks-based parallelism. ]] | [[File:Cuda-blocks-parallel.png|thumbnail|CUDA blocks-based parallelism. ]] | ||

| Line 138: | Line 169: | ||

add <<< 1, '''N''' >>> (dev_a, dev_b, dev_c); | add <<< 1, '''N''' >>> (dev_a, dev_b, dev_c); | ||

</syntaxhighlight> | </syntaxhighlight> | ||

Now instead of blocks, the job is distributed across parallel threads. What is the advantage of having parallel threads ? Unlike blocks, threads can communicate between each other: in other words, we parallelize across multiple threads in the block when heavy communication is involved. The chunks of code that can run independently, i.e. with little or no communication, are distributed across parallel blocks. | |||

= | = Advantages of shared memory= | ||

So far all the memory transfers in the kernel have been done via the regular GPU (global) memory which is relatively slow. Often | So far all the memory transfers in the kernel have been done via the regular GPU (global) memory which is relatively slow. Often we have so many communications between the threads that the performance decreases significantly. In order to address this issue there exists another type of memory called '''shared memory''' which can be used to speed-up the memory operations between the threads. However the trick is that only the threads within a block can communicate. In order to demonstrate the usage of such shared memory we consider the dot product example where two vectors are multiplied together element by element and then summed. Below is the kernel: | ||

<syntaxhighlight lang="cpp" line highlight="1,5"> | <syntaxhighlight lang="cpp" line highlight="1,5"> | ||

__global__ void dot(int *a, int *b, int *c){ | __global__ void dot(int *a, int *b, int *c){ | ||

| Line 147: | Line 178: | ||

} | } | ||

</syntaxhighlight> | </syntaxhighlight> | ||

After each thread | After each thread computes its portion, we need to add everything together: each thread has to share its data. However, the problem is that each copy of thread's temp variable is private. This can be resolved by the use of shared memory. Below is the kernel with the modifications to use shared memory: | ||

<syntaxhighlight lang="cpp" line highlight="1,4"> | <syntaxhighlight lang="cpp" line highlight="1,4"> | ||

#define N 512 | #define N 512 | ||

| Line 159: | Line 190: | ||

} | } | ||

</syntaxhighlight> | </syntaxhighlight> | ||

= Basic | |||

== Memory | = Basic performance considerations = | ||

== Memory transfers == | |||

* PCI-e is extremely slow (4-6 GB/s) compared to both host and device memories | * PCI-e is extremely slow (4-6 GB/s) compared to both host and device memories | ||

* Minimize | * Minimize host-to-device and device-to-host memory copies | ||

* Keep data on the device as long as possible | * Keep data on the device as long as possible | ||

* Sometimes it is not effificient to make the | * Sometimes it is not effificient to make the host (CPU) do non-optimal jobs; executing it on the GPU may still be faster than copying to CPU, executing, and copying back | ||

* Use memcpy times to analyse the execution times | * Use memcpy times to analyse the execution times | ||

== Bandwidth == | == Bandwidth == | ||

* Always keep CUDA bandwidth in mind when | * Always keep CUDA bandwidth limitations in mind when changing your code | ||

* Know the theoretical peak bandwidth of the various data links | * Know the theoretical peak bandwidth of the various data links | ||

* Count bytes read/written and compare to the theoretical peak | * Count bytes read/written and compare to the theoretical peak | ||

* Utilize the various memory spaces depending on the situation: global, shared, constant | * Utilize the various memory spaces depending on the situation: global, shared, constant | ||

== Common GPU | == Common GPU programming strategies == | ||

* Constant memory also resides in DRAM- much slower access than shared memory | * Constant memory also resides in DRAM - much slower access than shared memory | ||

** BUT, it’s cached !!! | ** BUT, it’s cached !!! | ||

** highly efficient access for read-only, broadcast | ** highly efficient access for read-only, broadcast | ||

* Carefully divide data acording to access patterns: | * Carefully divide data acording to access patterns: | ||

** | ** read-only: constant memory (very fast if in cache) | ||

** | ** read/write within block: shared memory (very fast) | ||

** | ** read/write within thread: registers (very fast) | ||

** | ** read/write input/results: global memory (very slow) | ||

Revision as of 18:45, 28 September 2017

Introduction

Dans ce tutoriel, nous présentons le composant de calcul hautement parallèle qu'est le processeur graphique (ou GPU pour graphics processing unit) et nous abordons le modèle CUDA avec quelques-unes de ses librairies numériques utilisées en calcul de haute performance.

Ce tutoriel montre comment utiliser CUDA pour accélérer des programmes en C ou en C++. Une bonne connaissance d'un de ces langages vous permettra d’en tirer le meilleur profit. Si CUDA sert aussi aux programmes en Fortran, nous nous limiterons ici à CUDA pour C/C++ et utiliserons le terme CUDA C. Il s'agit essentiellement de produire des fonctions en C/C++ pouvant être exécutées par les CPUs et les GPUs.

- Comprendre l'architecture d'un GPU;

- Comprendre le déroulement d'un programme CUDA;

- Comprendre et gérer les différents types de mémoires GPU;

- Écrire et compiler un exemple de code CUDA.

Qu'est-ce qu'un GPU?

Un GPU (pour graphics processing unit), est un processeur monopuce capable d'effectuer des calculs mathématiques rapidement pour produire des rendus d'images. Depuis quelques années, la puissance du GPU sert aussi à accélérer l'exécution de calculs intensifs dans plusieurs domaines de la recherche scientifique de pointe.

Qu'est-ce que CUDA?

CUDA est l'abréviation de Compute Unified Device Architecture. Il s'agit d'un environnement logiciel flexible et d'un modèle de programmation pour le traitement de calculs parallèles intensifs.

Architecture du GPU

Un GPU comporte deux types d'éléments principaux :

- la mémoire globale

- est semblable à la mémoire du CPU

- est accessible par un CPU et un GPU

- des multiprocesseurs en continu (SM pour streaming multiprocessor)

- chaque SM est formé de plusieurs processeurs en continu (SP pour streaming processor)

- qui effectuent les calculs

- chaque SM est doté d'une unité de contrôle, de registres, de pipelines d'exécution, etc. qui sui sont propres

Modèle de programmation

Voyons d'abord quelques termes importants :

- Hôte : désigne le CPU et la mémoire principale

- Composant : désigne le GPU et sa mémoire

What is CUDA?

CUDA = Compute Unified Device Architecture Provides access to instructions and memory of massively parallel elements in a GPU. Another definition: CUDA is a scalable parallel programming model and software environment for parallel computing.

CUDA GPU Architecture

There two main components of the GPU:

- Global memory

- Similar to CPU memory

- Accessible by both CPU and GPU

- Streaming multiprocessors (SMs)

- Each SM consists or many streaming processors (SPs)

- They perform actual computations

- Each SM has its own control init, registers, execution pipelines, etc

CUDA programming model

Before we start talking about the programming model, let's go over some useful terminology:

- Host – The CPU and its memory (host memory)

- Device – The GPU and its memory (device memory)

The CUDA programming model is a heterogeneous model in which both the CPU and GPU are used. CUDA code is capable of managing memory of both the CPU and the GPU as well as executing GPU functions, called kernels. Such kernels are executed by many GPU threads in parallel. Here is a five step recipe for a typical CUDA code:

- Declare and allocate both the host and device memories

- Initialize the host memory

- Transfer data from Host memory to device memory

- Execute GPU functions (kernels)

- Transfer data back to the host memory

Le modèle CUDA est un modèle hétérogène qui utilise à la fois le CPU et le GPU. Le code CUDA peut gérer les deux types de mémoires, la mémoire principale du CPU et la mémoire du composant GPU le code exécute aussi les fonctions du GPU appelées noyaux (kernels). Ces fonctions sont exécutées en parallèle par plusieurs fils GPU. Le processus comporte cinq étapes :

- déclaration et allocation de la mémoire principale et de la mémoire du composant

- initialisation de la mémoire principale

- transfert des données de la mémoire principale à la mémoire du composant

- exécution des fonctions GPU (noyaux)

- retour des données à la mémoire principale

Modèle d'exécution

CUDA block-threading model

Given a very large number of threads - in order to achieve massive parallelism one has to use all the threads possible - in a CUDA kernel, one needs to organize them somehow. In CUDA, all the threads are structured in threading blocks, the blocks are further organized into grids, as shown in the accompanying figure. In distributing the threads we must make sure that the following conditions are satisfied:

- threads within a block cooperate via the shared memory

- threads in different blocks can not cooperate

In this model the threads within a block work on the same set of instructions (but perhaps with different data sets) and exchange data between each other via shared memory. Threads in other blocks do the same thing (see the figure).

Each thread uses IDs to decide what data to work on:

- Block IDs: 1D or 2D (blockIdx.x, blockIdx.y)

- Thread IDs: 1D, 2D, or 3D (threadIdx.x, threadIdx.y, threadIdx.z)

Such a model simplifies memory addressing when processing multi-dimensional data.

Thread scheduling

Usually a streaming microprocessor (SM) executes one threading block at a time. The code is executed in groups of 32 threads (called warps). A hardware scheduller is free to assign blocks to any SM at any time. Furthermore, when an SM gets the block assigned to it, it does not mean that this particular block will be executed non-stop. In fact, the scheduler can postpone/suspend execution of such blocks under certain conditions when e.g. data becomes unavailable (indeed, it is quite time-consuming to read data from the global GPU memory). When it happens, the scheduler executes another threading block which is ready for execution. This is a so-called zero-overhead scheduling which makes the execution more streamlined so that SMs are not idle.

Types of GPU memory in CUDA

There are several types of memories available for CUDA operations:

- Global memory

- off-chip, good for I/O, but relatively slow

- Shared memory

- on-chip, good for thread collaboration, very fast

- Registers and Local Memory

- thread work space , very fast

- Constant memory

A few basic CUDA operations

CUDA memory allocation

- cudaMalloc((void**)&array, size)

- Allocates object in the device memory. Requires address of a pointer of allocated array and size.

- cudaFree(array)

- Deallocates object from the memory. Requires just a pointer to the array.

CUDA data transfer

- cudaMemcpy(array_dest, array_orig, size, direction)

- Copy the data from either device to host or host to device. Requires pointers to the arrays, size and the direction type (cudaMemcpyHostToDevice, cudaMemcpyDeviceToHost, cudaMemcpyDeviceToDevice, etc.)

- cudaMemcpyAsync

- Same as cudaMemcpy, but transfers the data asynchronously which means it doesn't block the execution of other processes.

A simple CUDA C program

The following example shows how to add two numbers on the GPU using CUDA. Note that this is just an exercise, it's very simple, so it will not scale at all.

__global__ void add (int *a, int *b, int *c){

*c = *a + *b;

}

int main(void){

int a, b, c;

int *dev_a, *dev_b, *dev_c;

int size = sizeof(int);

// allocate device copies of a,b, c

cudaMalloc ( (void**) &dev_a, size);

cudaMalloc ( (void**) &dev_b, size);

cudaMalloc ( (void**) &dev_c, size);

a=2; b=7;

// copy inputs to device

cudaMemcpy (dev_a, &a, size, cudaMemcpyHostToDevice);

cudaMemcpy (dev_b, &b, size, cudaMemcpyHostToDevice);

// launch add() kernel on GPU, passing parameters

add <<< 1, 1 >>> (dev_a, dev_b, dev_c);

// copy device result back to host

cudaMemcpy (&c, dev_c, size, cudaMemcpyDeviceToHost);

cudaFree ( dev_a ); cudaFree ( dev_b ); cudaFree ( dev_c );

}

Are we missing anything ? That code does not look parallel! Solution: Let's look at what's inside the triple brackets in the kernel call and make some changes :

add <<< N, 1 >>> (dev_a, dev_b, dev_c);

Here we replaced 1 by N, so that N different CUDA blocks will be executed at the same time. However, in order to achieve parallelism we need to make some changes to the kernel as well:

__global__ void add (int *a, int *b, int *c){

c[blockIdx.x] = a[blockIdx.x] + b[blockIdx.x];

where blockIdx.x is the unique number identifying a CUDA block. This way each CUDA block adds a value from a[ ] to b[ ].

Can we again make some modifications in those triple brackets ?

add <<< 1, '''N''' >>> (dev_a, dev_b, dev_c);

Now instead of blocks, the job is distributed across parallel threads. What is the advantage of having parallel threads ? Unlike blocks, threads can communicate between each other: in other words, we parallelize across multiple threads in the block when heavy communication is involved. The chunks of code that can run independently, i.e. with little or no communication, are distributed across parallel blocks.

So far all the memory transfers in the kernel have been done via the regular GPU (global) memory which is relatively slow. Often we have so many communications between the threads that the performance decreases significantly. In order to address this issue there exists another type of memory called shared memory which can be used to speed-up the memory operations between the threads. However the trick is that only the threads within a block can communicate. In order to demonstrate the usage of such shared memory we consider the dot product example where two vectors are multiplied together element by element and then summed. Below is the kernel:

__global__ void dot(int *a, int *b, int *c){

int temp = a[threadIdx.x]*b[threadIdx.x];

}

After each thread computes its portion, we need to add everything together: each thread has to share its data. However, the problem is that each copy of thread's temp variable is private. This can be resolved by the use of shared memory. Below is the kernel with the modifications to use shared memory:

#define N 512

__global__ void dot(int *a, int *b, int *c){

__shared__ int temp[N];

temp[threadIdx.x] = a[threadIdx.x]*b[threadIdx.x];

__syncthreads();

if(threadIdx.x==0){

int sum; for(int i=0;i<N;i++) sum+= temp[i];

*c=sum; }

}

Basic performance considerations

Memory transfers

- PCI-e is extremely slow (4-6 GB/s) compared to both host and device memories

- Minimize host-to-device and device-to-host memory copies

- Keep data on the device as long as possible

- Sometimes it is not effificient to make the host (CPU) do non-optimal jobs; executing it on the GPU may still be faster than copying to CPU, executing, and copying back

- Use memcpy times to analyse the execution times

Bandwidth

- Always keep CUDA bandwidth limitations in mind when changing your code

- Know the theoretical peak bandwidth of the various data links

- Count bytes read/written and compare to the theoretical peak

- Utilize the various memory spaces depending on the situation: global, shared, constant

Common GPU programming strategies

- Constant memory also resides in DRAM - much slower access than shared memory

- BUT, it’s cached !!!

- highly efficient access for read-only, broadcast

- Carefully divide data acording to access patterns:

- read-only: constant memory (very fast if in cache)

- read/write within block: shared memory (very fast)

- read/write within thread: registers (very fast)

- read/write input/results: global memory (very slow)